This analysis explored how encoding modality (Visual or Visuohaptic) influences user performance in a delayed match-to-sample task using excerpts of tomographic slices. The focus was on comparing error rates and response times between the two conditions to assess the effect of multisensory integration on task efficiency.

Data Cleaning and Preprocessing

Participants with overall accuracy below 60% (fewer than 58 correct responses out of 96 trials) were excluded from the analysis. Based on a binomial probability distribution, this threshold ensured that retained participants performed significantly above chance (p < 0.05). The final dataset included 18 participants.
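The exclusion rule can be checked with an exact binomial tail probability. This is a minimal sketch, not the repository's code: it assumes chance performance is 50% (a binary match/non-match response), which the text does not state, and the `keep` filter is a hypothetical helper.

```python
from math import comb

N_TRIALS = 96
CHANCE = 0.5  # assumption: binary match/non-match response, so guessing is correct 50% of the time


def upper_tail(k: int, n: int = N_TRIALS, p: float = CHANCE) -> float:
    """Exact binomial probability of getting k or more trials correct by guessing."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# 60% of 96 trials is 57.6; round up with integer arithmetic to avoid float error.
THRESHOLD = (60 * N_TRIALS + 99) // 100  # 58 correct responses


def keep(correct: int) -> bool:
    """Hypothetical filter: retain a participant only at or above the threshold."""
    return correct >= THRESHOLD


print(THRESHOLD, upper_tail(THRESHOLD))
```

Under the 50% assumption, the probability of reaching 58 correct responses by guessing alone falls below 0.05, which is what the exclusion criterion relies on.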

Statistical Analysis Framework

- Independent Variable: Encoding Modality (Visual, Visuohaptic).

- Dependent Variables: Error Rate and Response Time.

- Shapiro-Wilk Test: Assessed normality of both error rates and response times.

- Paired-Sample t-Tests: Applied to compare conditions for normally distributed data.

- Effect Size Reporting: Cohen's d quantified the magnitude of observed effects.
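The paired comparison and effect sizes can be sketched with Python's standard library. This is a minimal illustration under stated assumptions, not the code in the repository: the function names are hypothetical, and the sketch computes only the t statistic and effect sizes. In practice, the normality check and p-value would come from a statistics package; SciPy, for example, provides `scipy.stats.shapiro` and `scipy.stats.ttest_rel`. Because the text does not say which variant of Cohen's d was used for the paired comparisons, two common ones are shown.

```python
import math
import statistics


def paired_t(visual: list[float], visuohaptic: list[float]) -> tuple[float, int]:
    """Paired-sample t statistic and degrees of freedom (n - 1)."""
    if len(visual) != len(visuohaptic):
        raise ValueError("each participant needs a score in both conditions")
    diffs = [v - vh for v, vh in zip(visual, visuohaptic)]
    n = len(diffs)
    standard_error = statistics.stdev(diffs) / math.sqrt(n)
    return statistics.mean(diffs) / standard_error, n - 1


def cohens_d_av(visual: list[float], visuohaptic: list[float]) -> float:
    """Mean difference divided by the average of the two condition SDs."""
    sd_av = (statistics.stdev(visual) + statistics.stdev(visuohaptic)) / 2
    return (statistics.mean(visual) - statistics.mean(visuohaptic)) / sd_av


def cohens_d_z(visual: list[float], visuohaptic: list[float]) -> float:
    """Mean difference divided by the SD of the differences, i.e. t / sqrt(n)."""
    t, df = paired_t(visual, visuohaptic)
    return t / math.sqrt(df + 1)


# Toy per-participant scores (hypothetical, not study data).
visual = [3.0, 5.0, 7.0]
visuohaptic = [1.0, 4.0, 5.0]
t, df = paired_t(visual, visuohaptic)
print(t, df, cohens_d_av(visual, visuohaptic), cohens_d_z(visual, visuohaptic))
```

In the toy data the within-participant differences are 2, 1, and 2, giving t = 5.0 with 2 degrees of freedom. d_z rewards consistent within-participant differences, while d_av is scaled by between-participant spread, so the two variants can differ considerably for the same data.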

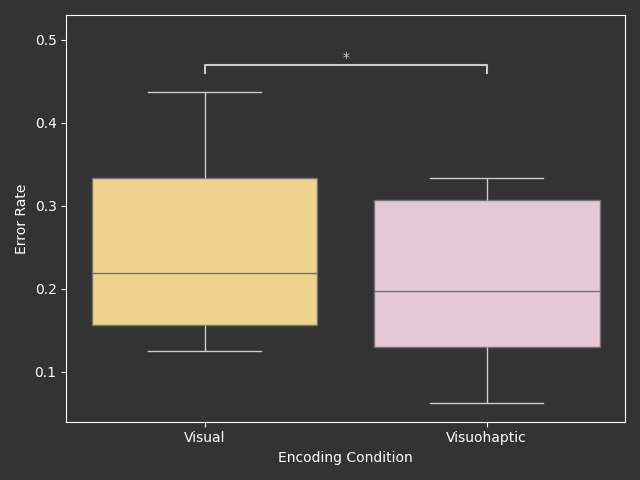

A paired-sample t-test revealed a significant difference in error rates between visual and visuohaptic encoding (t(17) = 4.92, p < 0.001, d = 0.48). The visuohaptic condition resulted in a lower error rate (x̄ = 0.20, σ = 0.10) than the visual condition (x̄ = 0.25, σ = 0.10).

A paired-sample t-test also identified a significant difference in response times (t(17) = 2.31, p = 0.034, d = 0.13). The visuohaptic condition produced shorter response times (x̄ = 13.40, σ = 4.07) than the visual condition (x̄ = 13.94, σ = 4.20).
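As a rough consistency check, the reported effect sizes can be recomputed from the rounded means and standard deviations. This sketch assumes d was computed as the mean difference divided by the average SD of the two conditions; the repository may use a different formula. Under that assumption, the recomputed values (about 0.50 and 0.13) match the reported ones up to rounding of the means, whereas the SD-of-differences variant t/√n would give about 1.16 and 0.54.

```python
import math

N = 18  # participants in the final dataset

# Reported results: (mean, SD) per condition, plus t(17) and Cohen's d.
REPORTED = {
    "error_rate": {"visual": (0.25, 0.10), "visuohaptic": (0.20, 0.10), "t": 4.92, "d": 0.48},
    "response_time": {"visual": (13.94, 4.20), "visuohaptic": (13.40, 4.07), "t": 2.31, "d": 0.13},
}


def d_average_sd(m1: float, s1: float, m2: float, s2: float) -> float:
    """Mean difference over the average of the two SDs (assumed d variant)."""
    return (m1 - m2) / ((s1 + s2) / 2)


for name, r in REPORTED.items():
    (m1, s1), (m2, s2) = r["visual"], r["visuohaptic"]
    d_av = d_average_sd(m1, s1, m2, s2)
    d_z = r["t"] / math.sqrt(N)  # SD-of-differences variant, t / sqrt(n)
    print(f"{name}: d_av={d_av:.2f}, d_z={d_z:.2f}, reported d={r['d']}")
```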

The complete analysis, including preprocessing, statistical modeling, and visualizations, is documented in a public GitHub repository at https://github.com/lsrodri/VHMatchMorph.

This data analysis was included in my ACM SIGGRAPH VRCAI '24 article, "Evaluating Visuohaptic Integration on Memory Retention of Morphological Tomographic Images."